Bleeding Llama: The Critical Memory Leak in Ollama That Exposes 300,000 AI Servers

Your AI Server Is Leaking Passwords. Three API Calls. Zero Authentication. Meet “Bleeding Llama.”

Imagine a vulnerability so elegant that an attacker can siphon the entire memory of your AI inference server — every user conversation, every API key, every environment variable — using nothing more than three unauthenticated HTTP requests. No exploits to compile. No zero-day market to browse. Just curl.

Welcome to CVE-2026-7482, a.k.a. “Bleeding Llama” — a critical heap out-of-bounds read vulnerability in Ollama, the open-source tool that’s become the default way to run large language models locally. CVSS score: 9.1. Exposed servers: approximately 300,000. Authentication required: absolutely none.

Discovered by Cyera Research and disclosed on May 5, 2026, this is the kind of vulnerability that makes you reconsider every AI tool you’ve deployed without thinking about the attack surface.

What Is Ollama, and Why Should You Care?

If you’re not familiar: Ollama is an open-source platform that lets you run large language models — Llama, Mistral, Gemma, and dozens of others — directly on your own hardware instead of calling cloud APIs. With 170,000+ GitHub stars, over 100 million Docker Hub downloads, and adoption across enterprises of every size, Ollama has quietly become the standard for self-hosted AI inference.

It’s everywhere. Internal chatbots, code assistants, data analysis tools, customer-facing AI products — if a company is running open-source LLMs, there’s a good chance Ollama is underneath it all.

And here’s the uncomfortable part: many of those deployments are sitting on the open internet, listening on all interfaces, with no authentication whatsoever.

What Happened

The vulnerability lives in Ollama’s GGUF model file processing pipeline — specifically in the WriteTo() and ConvertToF32() functions that handle tensor data during model quantization (the process of converting model weights between precision formats).

Here’s the core problem: Ollama trusts the tensor dimensions declared inside GGUF files without validating them against actual allocated buffer sizes.

An attacker crafts a malicious GGUF file that declares tensor sizes larger than the actual file content. When Ollama processes this file during quantization, it reads past the buffer boundary into adjacent heap memory — which can contain anything from other users’ chat sessions to API keys stored in environment variables.
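To make the mechanism concrete, here is a toy model in Python. It is not Ollama's code: a single byte string stands in for the heap, and the names `read_tensor_unchecked`, `heap`, and the sample secret are all hypothetical. It only shows what happens when a reader trusts a declared element count instead of the real data length.

```python
# Toy model of an out-of-bounds read. A bytearray stands in for the heap;
# nothing here reflects Ollama's real code or memory layout.

F16_SIZE = 2  # bytes per F16 element


def read_tensor_unchecked(heap, offset, declared_elems):
    """Return declared_elems F16 values starting at offset, trusting the header."""
    return bytes(heap[offset:offset + declared_elems * F16_SIZE])


# The tensor really holds 4 elements (8 bytes); unrelated data sits right after it.
tensor_data = bytes(8)
neighbour = b"API_KEY=hunter2!"  # hypothetical secret, 16 bytes
heap = bytearray(tensor_data + neighbour)

honest = read_tensor_unchecked(heap, 0, declared_elems=4)
inflated = read_tensor_unchecked(heap, 0, declared_elems=12)

print(honest)    # only the tensor's own bytes
print(inflated)  # tensor bytes followed by the neighbour's bytes
```

The honest header yields 8 bytes of tensor data. The inflated header yields the same 8 bytes plus everything stored next to them.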

The clever part? The exploit uses a lossless F16-to-F32 conversion, meaning the leaked heap memory gets encoded into the output model file exactly as it existed — no corruption, no garbage data. Clean extraction.

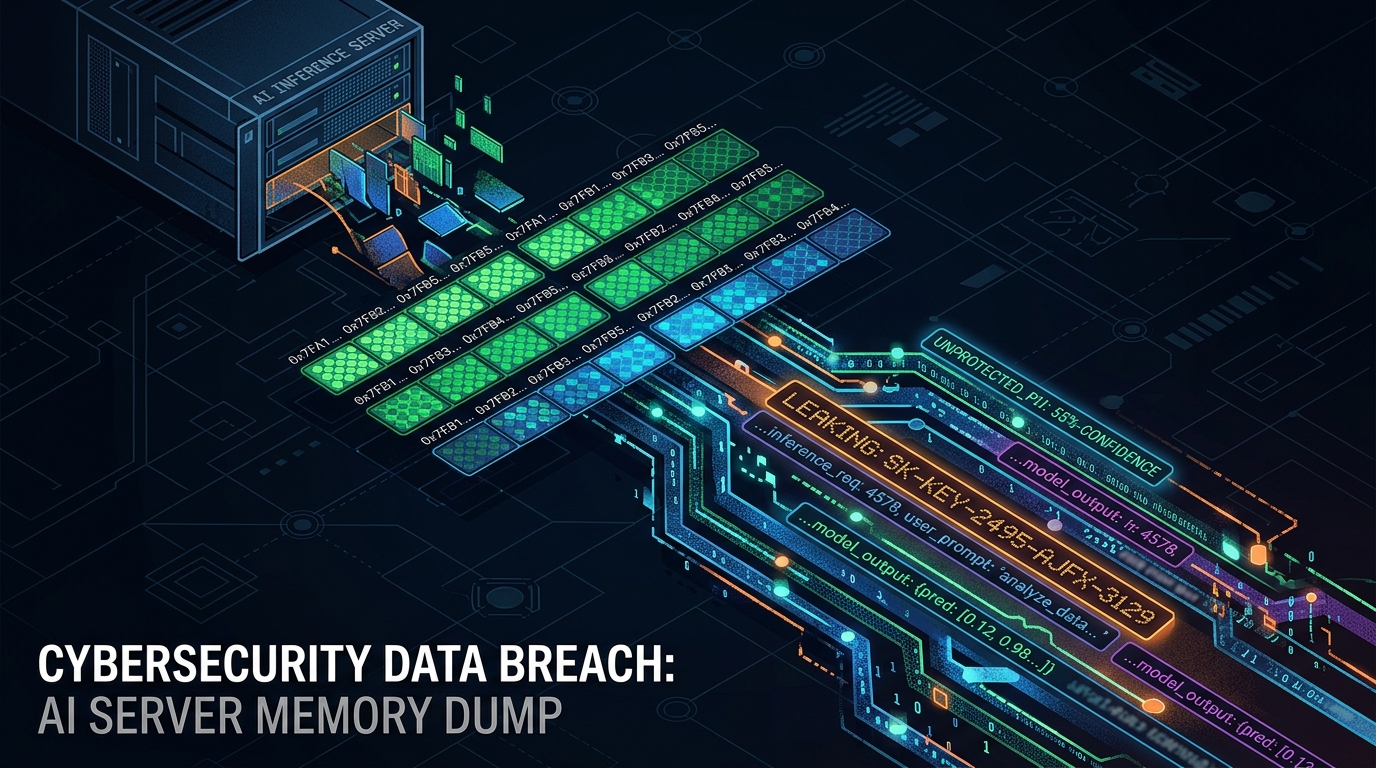

The Three-Step Attack

The entire exploit chain is beautifully simple and requires zero authentication, zero user interaction, and zero privileges:

- POST /api/blobs/sha256:<hash>. The attacker uploads their malicious GGUF file to the Ollama server. It’s stored as a blob, no questions asked.

- POST /api/create. The attacker tells Ollama to create a model from that blob. This triggers the quantization process, which reads past the buffer into heap memory, embedding the leaked data into the new model artifact.

- POST /api/push. The attacker pushes the resulting model (now containing stolen memory contents) to a registry they control. The data is exfiltrated. Done.

Three API calls. The server never crashes. No alarms go off. The attacker walks away with a dump of the Ollama process memory.

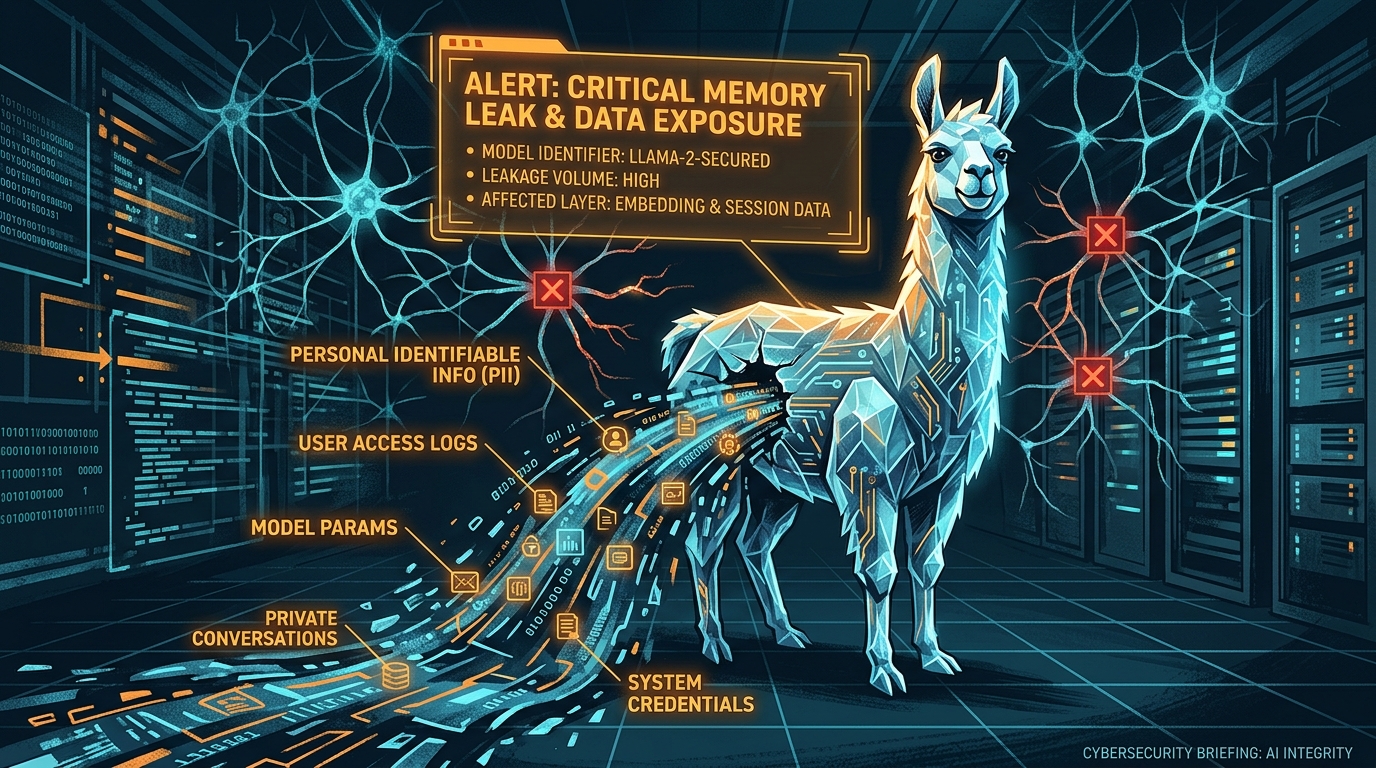

What Gets Leaked

This is where it gets painful. The Ollama process memory can contain:

- User messages and prompts from active and recent sessions — every question typed into the AI, every response generated

- System prompts and configuration data — the instructions that shape how the AI behaves

- Environment variables — which frequently contain API keys, database credentials, authentication tokens, and other secrets

- Fragments of other users’ conversations — on multi-user systems, one attacker can read everyone else’s data

- Model weights and proprietary data — for organizations running custom fine-tuned models

For a company running an internal AI assistant, this could mean every employee’s queries — including sensitive business discussions, code reviews, or strategic questions — are readable by anyone who can reach the server. For an MSP hosting AI services for clients, it means your customers’ data is exposed. For a healthcare or financial services company, this is a compliance nightmare.

The Technical Bit (For the Curious)

A few details worth understanding:

Why is this possible in Go? Go is a memory-safe language — buffer overflows shouldn’t happen. The answer is the unsafe package, which gives developers an escape hatch for low-level memory operations. Ollama uses unsafe in exactly one place: the GGUF tensor processing code. That’s precisely where this vulnerability lives. As Cyera dryly noted, “all the usual safety guarantees go out the window.”

The quantization pipeline: When Ollama processes a model file, it can convert between precision formats (e.g., F16 → F32). For optimization, the conversion always goes through F32 as an intermediate step. The WriteTo() function performs this conversion. But because the source buffer size comes from the attacker-controlled GGUF metadata — not the actual file — Ollama happily reads well past the end of the legitimate data into whatever’s next to it in the heap.
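The missing check is simple to state: the bytes a tensor claims to need must fit inside the bytes that are actually there. Below is a minimal sketch of that idea in Python. It is not the patch Ollama shipped, and the function name `tensor_fits`, its parameters, and the two-entry type table are assumptions made for illustration.

```python
from math import prod

# Bytes per element for the two types discussed here (an illustrative subset).
ELEMENT_SIZE = {"F16": 2, "F32": 4}


def tensor_fits(shape, dtype, offset, available_bytes):
    """Return True if a tensor with this declared shape fits in available_bytes.

    shape, dtype, and offset come from the untrusted file header;
    available_bytes is the size of the data that is really present.
    """
    if dtype not in ELEMENT_SIZE:
        return False
    if offset < 0 or any(dim < 0 for dim in shape):
        return False
    # Python integers cannot overflow. In a fixed-width language this
    # multiplication would need its own overflow check.
    needed = prod(shape) * ELEMENT_SIZE[dtype]
    return offset + needed <= available_bytes


print(tensor_fits((2, 2), "F16", 0, 8))  # header matches the data
print(tensor_fits((4, 3), "F16", 0, 8))  # header claims more than exists
```

The key design choice is where `available_bytes` comes from: it has to be measured from the file or buffer itself, never read from the same header that declares the shape.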

Why lossless matters: The F16-to-F32 conversion is lossless — every bit of the source data is preserved exactly. This means the leaked heap memory isn’t garbled or approximated. It’s a byte-for-byte copy of whatever was sitting in that memory region. The attacker gets clean, usable data.
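The lossless property is easy to check for yourself. The sketch below uses Python's standard `struct` module to widen 16-bit patterns to F32 and narrow them back. It illustrates the number formats only, not Ollama's conversion routine, and the helper names are hypothetical. NaN patterns are skipped because Python's float handling is not guaranteed to preserve NaN payload bits.

```python
import struct


def is_nan_pattern(bits):
    """True if a 16-bit pattern encodes an F16 NaN (exponent all ones, mantissa nonzero)."""
    return (bits & 0x7C00) == 0x7C00 and (bits & 0x03FF) != 0


def roundtrip_f16(bits):
    """Widen one F16 bit pattern to F32, narrow it back, and return the 16 bits."""
    half = struct.unpack("<e", struct.pack("<H", bits))[0]
    as_f32 = struct.unpack("<f", struct.pack("<f", half))[0]
    return struct.unpack("<H", struct.pack("<e", as_f32))[0]


patterns = [b for b in range(0x10000) if not is_nan_pattern(b)]
survivors = sum(1 for b in patterns if roundtrip_f16(b) == b)
print(f"{survivors} of {len(patterns)} non-NaN F16 patterns survive the F32 round trip")
```

Because every pattern tested comes back unchanged, any two bytes of leaked memory that get interpreted as an F16 value can be recovered from the F32 output.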

Stealth: The server doesn’t crash. No panic. No error logs. The memory read is silent. The only trace would be API calls to /api/blobs, /api/create, and /api/push — which are all normal Ollama operations and easy to miss in logs.

Why 300,000 Servers Are Exposed

Ollama’s default configuration binds to 127.0.0.1 (localhost only), which would be safe. But the widely documented approach for deploying Ollama in production — including in the official docs — sets OLLAMA_HOST=0.0.0.0, which opens it up on all network interfaces. Combined with zero built-in authentication on the API, this creates a perfect storm: servers running on port 11434, accessible from the entire internet, requiring no credentials to interact with.
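A quick way to reason about a given setting is to classify the bind address. The following Python sketch is a hypothetical helper, not part of Ollama. It handles only plain `host` and `host:port` values, and it assumes an empty host falls back to the localhost default described above.

```python
import ipaddress


def binds_beyond_loopback(ollama_host):
    """Return True if an OLLAMA_HOST-style value listens on a non-loopback address.

    Handles plain 'host' and 'host:port' forms only. Scheme prefixes and
    bracketed IPv6 addresses are out of scope for this sketch.
    """
    value = ollama_host.strip()
    host = value.rsplit(":", 1)[0] if ":" in value else value
    if host in ("", "localhost"):
        # Empty host is assumed to mean the localhost default.
        return False
    try:
        return not ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Unrecognized hostname: flag it so a human takes a look.
        return True


for setting in ("127.0.0.1", "127.0.0.1:11434", "0.0.0.0", "0.0.0.0:11434"):
    print(setting, "->", "exposed" if binds_beyond_loopback(setting) else "loopback only")
```

A value flagged as exposed is not automatically reachable from the internet, since firewalls and security groups still apply, but it is the precondition for everything else in this article.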

This is a textbook example of secure defaults losing to convenient defaults. The configuration that “just works” for multi-client deployments is also the configuration that exposes your AI server to the world.

The Disclosure Timeline — A Story in Itself

The timeline here is worth noting, because it reveals some friction in the vulnerability reporting process:

- February 2, 2026 — Vulnerability reported to Ollama

- February 25, 2026 — Ollama acknowledges and shares a fix

- v0.17.1 released — Patch shipped, but notably without clear security advisories

- March 2, 2026 — CVE request submitted to MITRE — no response

- April 26, 2026 — Cyera escalates to Echo, a third-party CVE Numbering Authority

- April 28, 2026 — CVE-2026-7482 officially assigned

- May 5, 2026 — Full public disclosure by Cyera Research

Three months from report to public disclosure. The patch was available relatively quickly, but the lack of a clear security advisory from Ollama means many organizations may have updated without realizing they were fixing a critical security vulnerability. If you’re running v0.17.1 or later, you’re patched, but you should still check whether your server was exposed before the update.

What You Should Do — Right Now

Immediate actions (today):

- Update Ollama to v0.17.1 or later. This is the patched version. If you’re running anything older, you’re vulnerable

- Check your network exposure. Is port 11434 accessible from the internet? Use ss -tlnp | grep 11434 or check your cloud security group rules. If it’s open, close it

- Rotate all credentials that were in the Ollama environment: API keys, tokens, database passwords, anything stored in environment variables
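The first two checks can be scripted. This is a minimal Python sketch with hypothetical helper names. It assumes you already know the installed version string and that it is plain `major.minor.patch`, with no pre-release suffix. The port check is an ordinary TCP connect and nothing Ollama-specific.

```python
import socket

PATCHED = (0, 17, 1)  # first fixed release named in this article


def is_patched(version):
    """Compare a plain 'major.minor.patch' string against the patched release."""
    parts = tuple(int(p) for p in version.lstrip("v").split(".")[:3])
    return parts >= PATCHED


def is_port_open(host, port=11434, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


print(is_patched("0.16.9"))       # older than the fix
print(is_patched("v0.17.1"))      # the fixed release
print(is_port_open("127.0.0.1"))  # depends on what is running locally
```

Run the port check from a machine outside your network, against the server's public address. A successful connection from the server to itself only proves that something is listening, not that the internet can reach it.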

Short-term (1–7 days):

- Audit your logs for suspicious API calls. Specifically, look for:

  - Unusual /api/blobs uploads (large GGUF files from unknown sources)

  - /api/create calls from unexpected IPs

  - /api/push operations pushing to registries you don’t recognize

- Bind Ollama to 127.0.0.1 if it doesn’t need to be network-accessible. If it does need network access, put it behind a reverse proxy with authentication

- Implement firewall rules restricting access to port 11434 to known, trusted IPs only
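The log audit can be partly automated by looking for a single client that walks through all three endpoints in order. The Python sketch below assumes a Gin-style access log line containing the client IP, the method, and a quoted path, as shown in the comment. That format, the regular expression, and the function name `suspicious_clients` are assumptions, so adjust them to what your deployment actually logs.

```python
import re
from collections import defaultdict

# Assumed log line shape (verify against your own logs):
# [GIN] 2026/03/01 - 10:15:02 | 200 | 1.2ms | 203.0.113.7 | POST "/api/create"
LINE = re.compile(
    r'\|\s*(?P<ip>[0-9a-fA-F.:]+)\s*\|\s*(?P<method>[A-Z]+)\s+"(?P<path>[^"]+)"'
)

STAGES = ("/api/blobs", "/api/create", "/api/push")


def suspicious_clients(lines):
    """Return IPs that POSTed to blobs, then create, then push, in that order."""
    progress = defaultdict(int)  # ip -> index of the next stage expected
    for line in lines:
        m = LINE.search(line)
        if not m or m["method"] != "POST":
            continue
        ip = m["ip"]
        stage = progress[ip]
        if stage < len(STAGES) and m["path"].startswith(STAGES[stage]):
            progress[ip] = stage + 1
    return sorted(ip for ip, stage in progress.items() if stage == len(STAGES))


sample = [
    '[GIN] 2026/03/01 - 10:15:00 | 201 | 1.2ms | 203.0.113.7 | POST "/api/blobs/sha256:abc"',
    '[GIN] 2026/03/01 - 10:15:01 | 200 | 3ms | 10.0.0.5 | POST "/api/create"',
    '[GIN] 2026/03/01 - 10:15:02 | 200 | 2s | 203.0.113.7 | POST "/api/create"',
    '[GIN] 2026/03/01 - 10:15:09 | 200 | 4s | 203.0.113.7 | POST "/api/push"',
]
print(suspicious_clients(sample))
```

The same sequence is also what a legitimate user produces when building and publishing a model, so treat each hit as a lead to investigate rather than proof of compromise.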

Longer term:

- Treat your AI inference server like any other sensitive service. Authentication, encryption, network segmentation, audit logging — the basics you’d apply to a database server apply here too

- Review your AI infrastructure for similar risks. Ollama isn’t the only self-hosted AI tool with a relaxed security posture. If you’re running other model servers (vLLM, TGI, LocalAI), apply the same scrutiny

- Monitor for similar vulnerabilities. The GGUF format is used across the AI ecosystem. Bugs in model file parsing are likely to recur in other tools

The Bottom Line

Bleeding Llama (CVE-2026-7482) is a reminder that “running AI locally” doesn’t mean “running AI securely.” Ollama is a fantastic tool, but its default deployment pattern has been exposing 300,000 servers to a trivially exploitable memory leak that requires no authentication and no special tools. If you’re running Ollama — especially if it’s internet-facing — update to v0.17.1 now, rotate your credentials, and check your logs. The era of treating AI infrastructure as exempt from security basics is over. Your LLM server is a server. Secure it like one.